Introduction

Mussels are aquatic animals with hard bivalve shells that are consumed by millions of people around the world. Mussels grow in freshwater rivers or lakes near their openings to the saline waters of the ocean, as well as in some coastal intertidal regions. Mussel aquaculture uses floating rafts with ropes suspended in the water, on which the mussels are cultured. Farmers collect mussel seeds from nearby rocky shores during low tides and attach them to the ropes on their rafts. Later, these ropes are covered with mesh socks that collect the mussels once they mature.

Spain is one of the biggest producers of mussels in the world. The Galicia region accounts for almost 90 percent of the mussel aquaculture in the country. Our study area for this notebook is Ria de Arousa, which is the biggest mussel farming area in the Galicia region.

Monitoring such aquaculture is an important part of maintaining aquatic ecosystems. While sustainable farming can help the ecosystem, its exploitation can degrade environmental quality and biodiversity. Therefore, it is important to monitor the growth of mussel farms in a region and their expansion into fragile areas. While physical surveys can be arduous and time consuming, satellite imagery and deep learning can help monitor mussel farming with much less effort. These analyses can also be helpful in comparing changes over longer periods of time.

In this notebook, we will train a deep learning model to detect mussel farms in high-resolution imagery of the Ria De Arousa region of Spain.

Export training data

from arcgis.gis import GIS

# Connect to GIS
gis = GIS('home')

The following imagery layer contains high resolution imagery of a part of the Ria De Arousa region. The spatial resolution of the imagery is 30 cm, and it contains 3 bands: Red, Green, and Blue. It is used as the 'Input Raster' for exporting the training data.

training_raster = gis.content.get('f2b92eed10394e5eb3c7f135861937d9')

training_raster

The following feature layer contains the bounding boxes for a few mussel farms in the Ria de Arousa region. It is used as the 'Input Feature Class' for exporting the training data.

training_feature_layer = gis.content.get('ff6a48b3391c4a24b807af0eb08bb6c1')

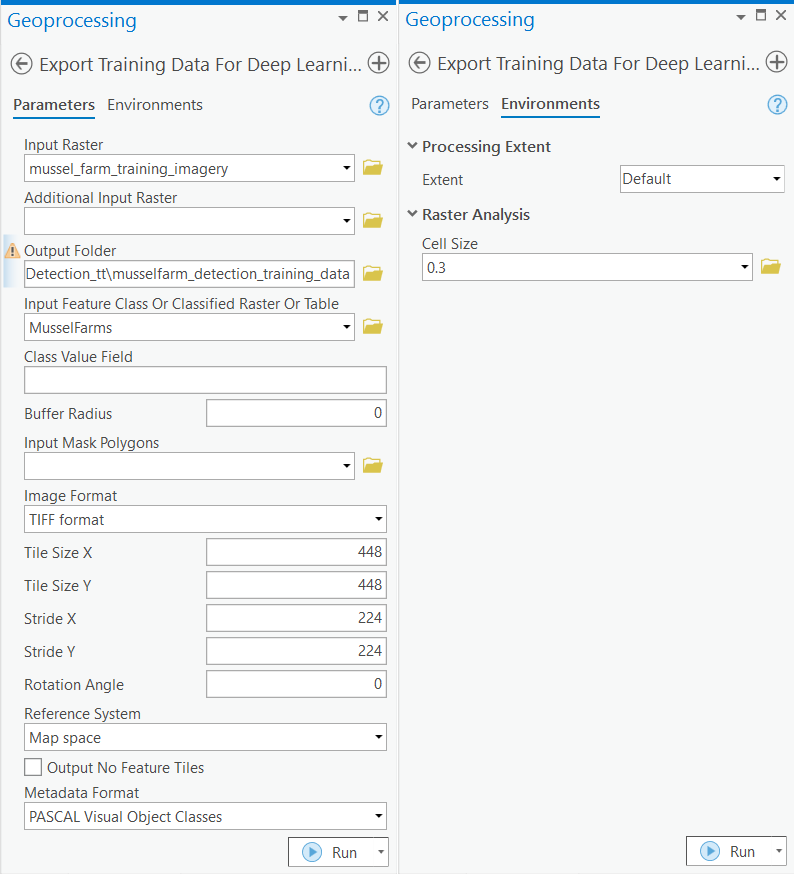

training_feature_layer

Training data can be exported by using the 'Export Training Data For Deep Learning' tool available in ArcGIS Pro and ArcGIS Enterprise. For this example, we prepared the training data in the 'PASCAL Visual Object Classes' format, using a 'chip_size' of 448 px and a 'cell_size' of 0.3 m, in ArcGIS Pro. The 'Input Raster' and the 'Input Feature Class' have been made available to export the required training data. We have also provided the exported training data in the next section, if you wish to skip this step.
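As a quick sanity check on these export settings, the ground footprint of a single chip follows directly from the chip size and the cell size. The sketch below only does that arithmetic; the helper name `chip_footprint_m` is hypothetical and is not part of ArcGIS.

```python
def chip_footprint_m(chip_size_px: int, cell_size_m: float) -> float:
    """Side length, in meters, of the square ground area covered by one chip."""
    return chip_size_px * cell_size_m


# Values taken from the export settings described above.
side_m = chip_footprint_m(chip_size_px=448, cell_size_m=0.3)
area_m2 = side_m ** 2

print(f"Each chip covers about {side_m:.1f} m x {side_m:.1f} m ({area_m2:.0f} m^2)")
```

With these settings each chip spans roughly 134 m on a side, which gives a feel for how much of a farm fits inside one training image.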

Train the model

Necessary imports

import os

import glob

import zipfile

from pathlib import Path

from arcgis.learn import prepare_data, MMDetection

Get training data

We have already exported the data that can be directly used by following the steps below:

training_data = gis.content.get('57cb821dedca4c5598e81c8d2d510c91')

training_data

filepath = training_data.download(file_name=training_data.name)

# Unzip training data
with zipfile.ZipFile(filepath, 'r') as zip_ref:
    zip_ref.extractall(Path(filepath).parent)

data_path = Path(os.path.join(os.path.splitext(filepath)[0]))

Prepare data

We will specify the path to our training data and a few hyperparameters.

- path: Path of the folder (or list of folders) containing the training data.

- batch_size: Number of images the model trains on in each step of an epoch. The largest usable value depends on the memory of your graphics card.

- chip_size: The same as the tile size used while exporting the dataset.
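To make the effect of batch_size concrete: the number of training steps in one epoch is the number of training chips divided by the batch size, rounded up. The sketch below illustrates this with a made-up chip count, which is a placeholder and not the size of this dataset. Whether a final partial batch is kept or dropped depends on the data loader, so treat the result as approximate.

```python
import math


def steps_per_epoch(num_training_chips: int, batch_size: int) -> int:
    """Number of batches needed to pass over every training chip once."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return math.ceil(num_training_chips / batch_size)


# Hypothetical chip count, for illustration only.
example_chips = 250
for bs in (2, 4, 8):
    print(f"batch_size={bs}: {steps_per_epoch(example_chips, bs)} steps per epoch")
```

A smaller batch size lowers GPU memory use at the cost of more steps per epoch.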

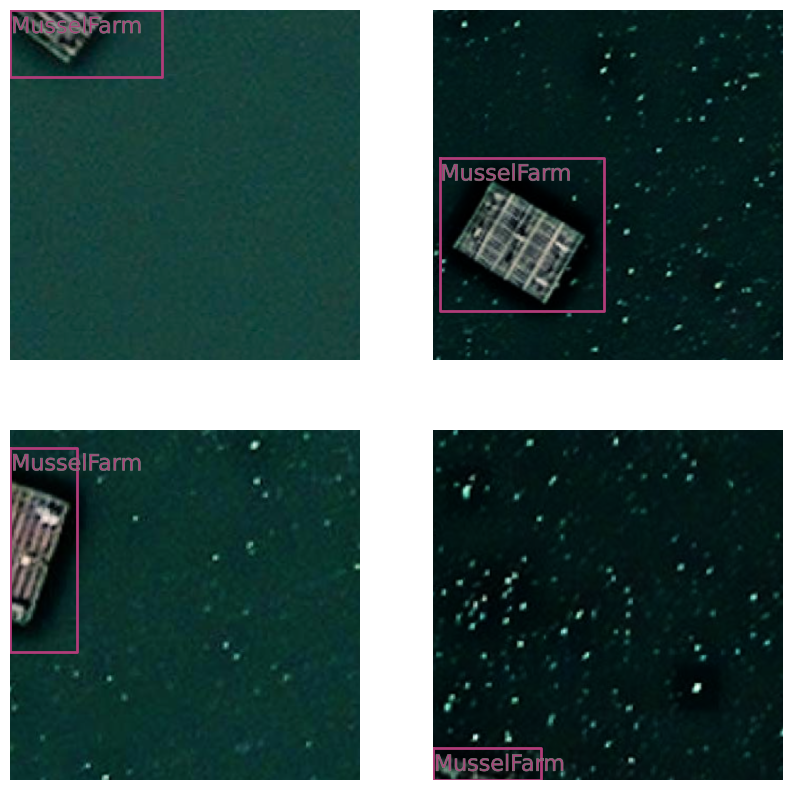

data = prepare_data(data_path, batch_size=4, chip_size=448)

Visualize training data

To get a sense of what the training data looks like, the show_batch() method will randomly pick a few training chips and visualize them.

data.show_batch(rows=2)
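The labels can also be inspected without plotting. The sketch below parses one annotation in the standard PASCAL VOC XML layout using only the Python standard library. The XML string is an invented example (file name, class name, and coordinates are placeholders), and the sketch assumes that the exported label files follow the standard `object`/`bndbox` structure; the function name `read_voc_boxes` is hypothetical.

```python
import xml.etree.ElementTree as ET

# Invented annotation in the standard PASCAL VOC layout (placeholder values).
SAMPLE_XML = """
<annotation>
  <filename>000000000.tif</filename>
  <size><width>448</width><height>448</height><depth>3</depth></size>
  <object>
    <name>1</name>
    <bndbox><xmin>10</xmin><ymin>20</ymin><xmax>110</xmax><ymax>70</ymax></bndbox>
  </object>
  <object>
    <name>1</name>
    <bndbox><xmin>200</xmin><ymin>220</ymin><xmax>300</xmax><ymax>260</ymax></bndbox>
  </object>
</annotation>
"""


def read_voc_boxes(xml_text):
    """Return (class_name, xmin, ymin, xmax, ymax) for every object in the annotation."""
    root = ET.fromstring(xml_text.strip())
    boxes = []
    for obj in root.iter("object"):
        name = obj.findtext("name")
        bndbox = obj.find("bndbox")
        coords = tuple(
            float(bndbox.findtext(tag)) for tag in ("xmin", "ymin", "xmax", "ymax")
        )
        boxes.append((name, *coords))
    return boxes


boxes = read_voc_boxes(SAMPLE_XML)
for name, xmin, ymin, xmax, ymax in boxes:
    print(f"class {name}: width={xmax - xmin:.0f} px, height={ymax - ymin:.0f} px")
```

Listing box widths and heights this way is a simple means of spotting degenerate or unexpectedly large labels before training.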

Load model architecture

Through the integration of the MMDetection library, arcgis.learn allows the use of the Dynamic RCNN model, along with many other models. For more in-depth information on how to use MMDetection, please see Use MMDetection with arcgis.learn.

MMDetection.supported_models

['atss', 'carafe', 'cascade_rcnn', 'cascade_rpn', 'dcn', 'detectors', 'dino', 'double_heads', 'dynamic_rcnn', 'empirical_attention', 'fcos', 'foveabox', 'fsaf', 'ghm', 'hrnet', 'libra_rcnn', 'nas_fcos', 'pafpn', 'pisa', 'regnet', 'reppoints', 'res2net', 'sabl', 'vfnet']

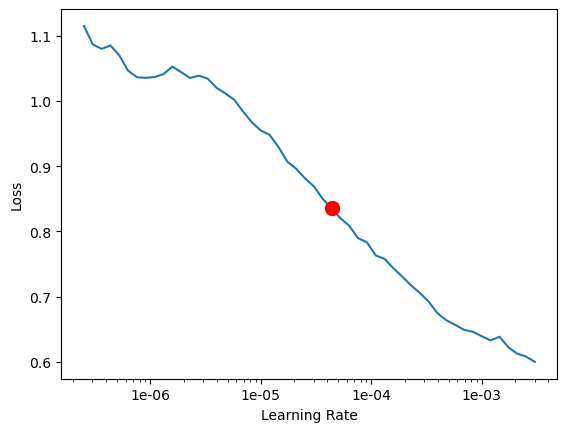

model = MMDetection(data, 'dynamic_rcnn')

Find an optimal learning rate

Learning rate is one of the most important hyperparameters in model training. The ArcGIS API for Python provides a learning rate finder that automatically suggests a suitable learning rate for you.

lr = model.lr_find()

lr

4.365158322401661e-05
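For intuition about what a learning rate finder does, here is a toy learning rate range test on a one-parameter quadratic loss. It sweeps the learning rate exponentially, takes one gradient step per value, and suggests the rate whose step reduced the loss the most. This is an illustrative sketch only: it is not how `lr_find()` is implemented in arcgis.learn, and all names in it are hypothetical.

```python
def lr_range_test(lr_min=1e-5, lr_max=10.0, num_steps=100):
    """Sweep learning rates on loss(w) = 0.5 * w**2 and record the loss after each step."""
    w = 1.0  # initial parameter value
    ratio = (lr_max / lr_min) ** (1.0 / (num_steps - 1))
    lrs, losses = [], []
    for step in range(num_steps):
        lr = lr_min * ratio ** step
        grad = w           # derivative of 0.5 * w**2 with respect to w
        w = w - lr * grad  # plain gradient descent step
        lrs.append(lr)
        losses.append(0.5 * w ** 2)
    return lrs, losses


def suggest_lr(lrs, losses):
    """Pick the learning rate whose step produced the largest drop in loss."""
    # changes[i] is the loss change caused by the step taken with lrs[i + 1].
    changes = [losses[i] - losses[i - 1] for i in range(1, len(losses))]
    steepest = min(range(len(changes)), key=changes.__getitem__)
    return lrs[steepest + 1]


lrs, losses = lr_range_test()
suggested = suggest_lr(lrs, losses)
print(f"suggested learning rate: {suggested:.3g}")
```

In this toy problem the loss diverges once the learning rate exceeds 2, so the suggested value always lies below that point. Real finders work on mini-batch losses and usually smooth the curve first.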

Fit the model

Next, we will train the model for a few epochs with the learning rate found above. Given the small size of the training dataset, we can train the model for 10 epochs.

model.fit(10, lr=lr)

| epoch | train_loss | valid_loss | average_precision | time |
|---|---|---|---|---|
| 0 | 0.657232 | 0.551822 | 0.746600 | 01:06 |
| 1 | 0.412948 | 0.421975 | 0.825002 | 01:06 |
| 2 | 0.346688 | 0.336524 | 0.847761 | 01:07 |
| 3 | 0.305406 | 0.351150 | 0.849114 | 01:06 |
| 4 | 0.301727 | 0.347651 | 0.850602 | 01:06 |
| 5 | 0.284532 | 0.346730 | 0.866180 | 01:06 |
| 6 | 0.277933 | 0.309670 | 0.858802 | 01:06 |
| 7 | 0.277370 | 0.293810 | 0.883472 | 01:05 |
| 8 | 0.253609 | 0.284540 | 0.883564 | 01:06 |
| 9 | 0.285145 | 0.277822 | 0.891327 | 01:08 |

As we can see, the training loss decreased through epoch 8 and rose slightly in the last epoch, while the validation loss reached its lowest value and the average precision its highest value in the last epoch. As such, there could be room for more training.
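The training log can also be checked programmatically. The sketch below copies the values from the table above into a plain list and finds the epochs with the lowest validation loss and the highest average precision, along with any epochs where the validation loss rose. The variable names are illustrative only.

```python
# (epoch, train_loss, valid_loss, average_precision) copied from the training log above.
history = [
    (0, 0.657232, 0.551822, 0.746600),
    (1, 0.412948, 0.421975, 0.825002),
    (2, 0.346688, 0.336524, 0.847761),
    (3, 0.305406, 0.351150, 0.849114),
    (4, 0.301727, 0.347651, 0.850602),
    (5, 0.284532, 0.346730, 0.866180),
    (6, 0.277933, 0.309670, 0.858802),
    (7, 0.277370, 0.293810, 0.883472),
    (8, 0.253609, 0.284540, 0.883564),
    (9, 0.285145, 0.277822, 0.891327),
]

best_by_valid_loss = min(history, key=lambda row: row[2])
best_by_ap = max(history, key=lambda row: row[3])

# Epochs in which the validation loss rose relative to the previous epoch.
valid_loss_increases = [
    cur[0] for prev, cur in zip(history, history[1:]) if cur[2] > prev[2]
]

print("lowest validation loss at epoch", best_by_valid_loss[0])
print("highest average precision at epoch", best_by_ap[0])
print("validation loss increased at epochs", valid_loss_increases)
```

Because both metrics are still at their best in the final epoch, the log gives no sign yet that the model has started to overfit.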

Visualize results in validation set

It is good practice to compare the results of the model with the ground truth. The code below picks random samples and shows the ground truth and the model predictions side by side. This enables us to preview the results of the model we trained.

model.show_results()
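Beyond visual comparison, the agreement between a predicted box and a ground truth box is commonly quantified with intersection over union (IoU), which is also the usual basis for matching detections when computing average precision. The sketch below is a generic IoU function with made-up box coordinates; it is not taken from arcgis.learn.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (xmin, ymin, xmax, ymax)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlap rectangle, clamped at zero when boxes are disjoint.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# Made-up pixel coordinates for one labeled farm and one prediction.
ground_truth = (100, 100, 200, 150)
prediction = (110, 105, 210, 155)

print(f"IoU = {iou(ground_truth, prediction):.3f}")
```

A prediction is typically counted as correct when its IoU with a ground truth box exceeds a chosen threshold.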

Accuracy assessment

arcgis.learn provides the average_precision_score() method that computes the average precision of the model on the validation set for each class.

model.average_precision_score()

{'MusselFarm': 0.8913268297731172}

Save the model

We will save the trained model as a 'Deep Learning Package' ('.dlpk' format). The Deep Learning package is the standard format used to deploy deep learning models on the ArcGIS platform.

We will use the save() method to save the trained model. By default, it will be saved to the 'models' sub-folder within our training data folder.

model.save('musselfarms_mmd_dynamic_rcnn_10ep')

Computing model metrics...

WindowsPath('~/AppData/Local/Temp/musselfarm_detection_training_data/models/musselfarms_mmd_dynamic_rcnn_10ep')

Deploy the model and detect mussel farms

We can now use the saved model to detect mussel farms in the entire Ria De Arousa region. Here, we have provided only a sample raster for Ria De Arousa. The following imagery layer contains high resolution imagery of a part of the Ria De Arousa region. The spatial resolution of the imagery is 30 cm, and it contains 3 bands: Red, Green, and Blue.

#get raster data using item id

sample_inference_raster = gis.content.get('d6f035f5de504c86855e0ee70e83ad0e')

sample_inference_raster

Model builder

To save time and resources, we will only run the model on the water body and not the surrounding land masses, as the mussel farms are only found in water. To extract a raster with only the water body, we created a water mask using the Sentinel-2 Views NDWI. The following model builder can be used to create a water mask and detect the mussel farms.

model_builder = gis.content.get('2647a386f5a04917b74cc4f40a48f57f')

model_builder

Tools used:

- Copy Raster: Copies a region of the Sentinel-2 Views NDWI hosted imagery and creates a subset to be processed further.

- Greater Than Equal: Creates a binary raster with water pixels as 1 and others as 0.

- Reclassify: Reclassifies the other pixels (value 0) in the binary raster as 'No Data'.

- Raster to Polygon: Converts the raster into a feature layer with polygons representing the water mask.

- Fill Gaps: Fills gaps smaller than 1500 square meters (approximate maximum area of a mussel farm) in the water mask.

- Extract By Mask: Uses the water mask to clip the original raster and create a new raster containing only the water body.

- Detect Objects Using Deep Learning: Detects mussel farms on the clipped raster using the model we trained earlier.
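The masking logic behind the Greater Than Equal, Reclassify, and Extract By Mask steps can be sketched on tiny in-memory grids. This is a simplified illustration in plain Python, not the geoprocessing tools themselves: it skips the Raster to Polygon and Fill Gaps steps, the grids are made up, and the NDWI threshold is a hypothetical value because the one used in the model is not stated here.

```python
NODATA = None
NDWI_THRESHOLD = 0.0  # hypothetical threshold, chosen only for illustration


def water_mask(ndwi, threshold=NDWI_THRESHOLD):
    """Greater Than Equal + Reclassify: 1 where NDWI >= threshold, NoData elsewhere."""
    return [[1 if value >= threshold else NODATA for value in row] for row in ndwi]


def extract_by_mask(raster, mask):
    """Keep raster values where the mask is set; everything else becomes NoData."""
    return [
        [pixel if flag is not NODATA else NODATA for pixel, flag in zip(r_row, m_row)]
        for r_row, m_row in zip(raster, mask)
    ]


# Tiny made-up grids: NDWI values and a single-band image of the same shape.
ndwi = [
    [0.45, 0.30, -0.20],
    [0.10, -0.05, -0.40],
]
image = [
    [12, 15, 90],
    [14, 80, 95],
]

mask = water_mask(ndwi)
clipped = extract_by_mask(image, mask)

for row in clipped:
    print(row)
```

Only the pixels flagged as water survive the clip, so the detector never has to process land pixels.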

Model Builder Diagram:

We can use the following step if we want to detect mussel farms in any given imagery containing only the water body, without running the model builder. In this step, we will generate a feature layer with detected mussel farms using the 'Detect Objects Using Deep Learning' tool available in both ArcGIS Pro and ArcGIS Enterprise.

Results

The model was run on the entire Ria De Arousa region and the results can be viewed here.

# Get feature layer using item ID
fc = gis.content.get('477d756f79e4400fa91c5b220406d98c')

fc

# Get webmap using item ID
wm_item = gis.content.get('36714d14f80649e99aaf702f3cec6455')

wm_item

# Create map object with webmap layer
mapp = gis.map(wm_item)

# Add feature layer over map

mapp.content.add(fc)

# Zoom to the feature layer extent

mapp.zoom_to_layer(fc)

# Display map

mapp

Conclusion

In this notebook, we saw how deep learning and high-resolution satellite imagery can be used to detect mussel farms, which is an important task for monitoring and conservation. We used a small sample as training data with Dynamic RCNN, one of the object detection models available through the MMDetection integration in arcgis.learn. We trained the model for 10 epochs and then deployed it to detect mussel farms across the Ria De Arousa region. To save time and resources, we used a model builder to clip out a raster containing only the water body, and ran the detection on that clipped raster. The model reached an average precision of about 0.89 on the validation set, and almost all the mussel farms in the region were detected.