Prerequisites

- Please refer to the prerequisites section in our guide for more information. This sample demonstrates how to export training data and run model inference using ArcGIS Image Server. Alternatively, both steps can be done in ArcGIS Pro.

- If you have already exported training samples using ArcGIS Pro, you can jump straight to the training section. The saved model can also be imported into ArcGIS Pro directly.

Introduction and objective

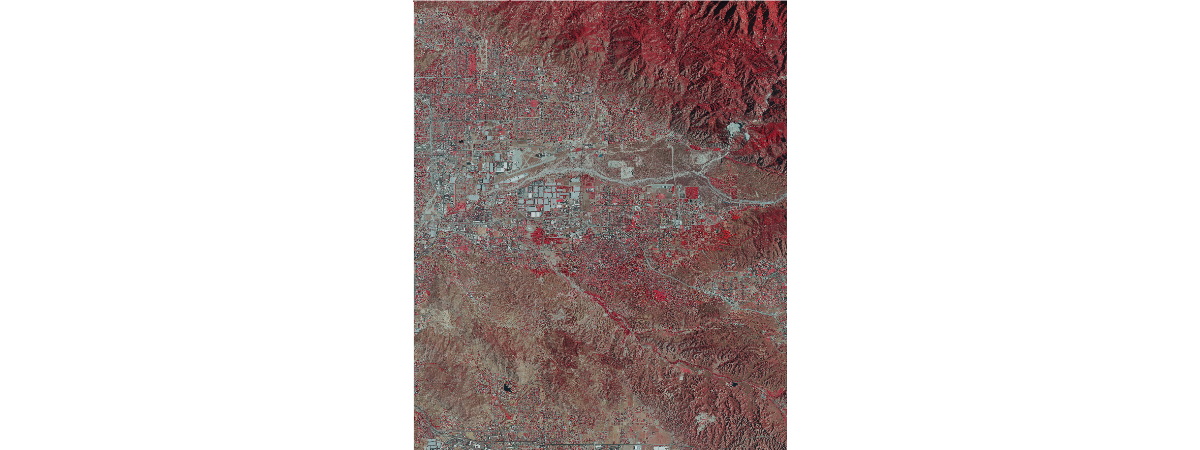

Deep learning has achieved great success with state-of-the-art results, but taking it to the field and solving real-world problems is still a challenge. Integrating the latest AI research with ArcGIS opens up a world of opportunities. This notebook demonstrates an end-to-end deep learning workflow using ArcGIS API for Python. The workflow consists of three major steps: (1) extracting training data, (2) training a deep learning object detection model, and (3) deploying the model for inference and creating maps. To illustrate this process, we detect swimming pools in Redlands, CA using remote sensing imagery.

Part 1 - export training data for deep learning

Import ArcGIS API for Python and get connected to your GIS

import arcgis

import sys

from arcgis import GIS, learn

from arcgis.raster import analytics

from arcgis.raster.functions import extract_band, apply, clip

from arcgis.raster.analytics import list_datastore_content

from arcgis.learn import SingleShotDetector, prepare_data, Model, list_models, detect_objects

arcgis.env.verbose = True

from arcgis.geocoding import geocode

gis = GIS('https://pythonapi.playground.esri.com/portal', 'arcgis_python', 'amazing_arcgis_123')

Prepare data that will be used for training data export

To export training data, we need a labeled feature class that contains the bounding box for each object, and a raster layer that contains all the pixels and band information. For this swimming pool detection case, we created the feature class by hand-labeling the bounding box of each swimming pool in Redlands using ArcGIS Pro, with USA NAIP Imagery: Color Infrared as the raster data.

pool_bb = gis.content.get('3443553a9e8443eeb570ef1b973e44a8')

pool_bb

pool_bb_layer = pool_bb.layers[0]

pool_bb_layer.url

'https://pythonapi.playground.esri.com/server/rest/services/Hosted/SwimmingPoolLabels/FeatureServer/0'

m = gis.map("Prospect Park, Redlands, CA")

m

m.basemap = 'gray'

Now let's retrieve the NAIP image layer.

naip_item = gis.content.get('26836cf06f034854998ccedef1772e85')

naip_item

naiplayer = naip_item.layers[0]

naiplayer

m.add_layer(naiplayer)

Specify a folder name in raster store that will be used to store our training data

Make sure a raster store is ready on your raster analytics image server. This is where the output subimages (also called chips), labels, and metadata files will be stored.

ds = analytics.get_datastores(gis=gis)

ds

<DatastoreManager for https://pythonapi.playground.esri.com/ra/admin>

ds.search()

[<Datastore title:"/fileShares/ListDatastoreContent" type:"folder">, <Datastore title:"/rasterStores/RasterDataStore" type:"rasterStore">]

rasterstore = ds.get("/rasterStores/RasterDataStore")

rasterstore

<Datastore title:"/rasterStores/RasterDataStore" type:"rasterStore">

samplefolder = "pool_chips_test"

samplefolder

'pool_chips_test'

Export training data using arcgis.learn

With the feature class and raster layer in place, we are ready to export training data using the export_training_data() method in the arcgis.learn module. In addition to the feature class, raster layer, and output folder, we also need to specify a few other parameters, such as tile_size (size of the image chips), stride_size (distance to move in the X and Y directions when creating the next image chip), chip_format (TIFF, PNG, or JPEG), and metadata_format (how the bounding boxes are stored). More detail can be found in the export_training_data() API reference.

Depending on the size of your data, the tile and stride sizes, and your computing resources, this operation took between 15 minutes and 2 hours in our experiments. Do not re-run it if you have already run it once, unless you would like to change the settings.
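To see how tile_size and stride_size interact, the following is a minimal sketch of how chip origins could be laid out along each axis. It assumes chips are cut on a regular grid and that only full chips are kept. The raster dimensions and the chip_origins helper are hypothetical, and the export tool may treat raster edges differently.

```python
def chip_origins(extent, tile, stride):
    """Return the start offsets of full chips along one axis.

    extent: raster size in pixels along the axis
    tile:   chip size in pixels (tile_size)
    stride: step in pixels between consecutive chip origins (stride_size)
    """
    if tile > extent:
        return []
    return list(range(0, extent - tile + 1, stride))


# Hypothetical 1344 x 896 pixel raster, with the tile and stride sizes used in this notebook.
xs = chip_origins(1344, tile=448, stride=224)
ys = chip_origins(896, tile=448, stride=224)

print(xs)                 # [0, 224, 448, 672, 896]
print(len(xs) * len(ys))  # 15 full chips in total
```

With a stride of half the tile size, neighboring chips overlap by 50%, so a pool that is cut off at the edge of one chip is likely to appear whole in a neighboring chip.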

pool_bb

pool_bb_layer

<FeatureLayer url:"https://datascienceadv.esri.com/server/rest/services/Hosted/SwimmingPoolLabels/FeatureServer/0">

export = learn.export_training_data(input_raster=naiplayer,

output_location=samplefolder,

input_class_data=pool_bb_layer,

chip_format="PNG",

tile_size={"x":448,"y":448},

stride_size={"x":224,"y":224},

metadata_format="PASCAL_VOC_rectangles",

classvalue_field = "Id",

buffer_radius = 6,

context={"startIndex": 0, "exportAllTiles": False},

gis = gis)

export

Now let's get into the raster store and look at what has been generated and exported.

samples = list_datastore_content(rasterstore.datapath + '/' + samplefolder + "/images", filter = "*png")

# print out the first five chips/subimages

samples['/rasterStores/RasterDataStore/pool_chips_test/images'][0:5]

Submitted. Executing... Start Time: Thursday, February 21, 2019 9:44:27 PM Running script ListDatastoreContent...

['/rasterStores/LocalRasterStore/pool_chips_yongyao/images/000000000.png', '/rasterStores/LocalRasterStore/pool_chips_yongyao/images/000000001.png', '/rasterStores/LocalRasterStore/pool_chips_yongyao/images/000000002.png', '/rasterStores/LocalRasterStore/pool_chips_yongyao/images/000000003.png', '/rasterStores/LocalRasterStore/pool_chips_yongyao/images/000000004.png']

labels = list_datastore_content(rasterstore.datapath + '/' + samplefolder + "/labels", filter = "*xml")

# print out the labels/bounding boxes for the first five chips

labels['/rasterStores/RasterDataStore/pool_chips_test/labels'][0:5]

Start Time: Thursday, February 21, 2019 9:44:29 PM Running script ListDatastoreContent... Completed script ListDatastoreContent... Succeeded at Thursday, February 21, 2019 9:44:29 PM (Elapsed Time: 0.05 seconds)

['/rasterStores/LocalRasterStore/pool_chips_yongyao/labels/000000000.xml', '/rasterStores/LocalRasterStore/pool_chips_yongyao/labels/000000001.xml', '/rasterStores/LocalRasterStore/pool_chips_yongyao/labels/000000002.xml', '/rasterStores/LocalRasterStore/pool_chips_yongyao/labels/000000003.xml', '/rasterStores/LocalRasterStore/pool_chips_yongyao/labels/000000004.xml']

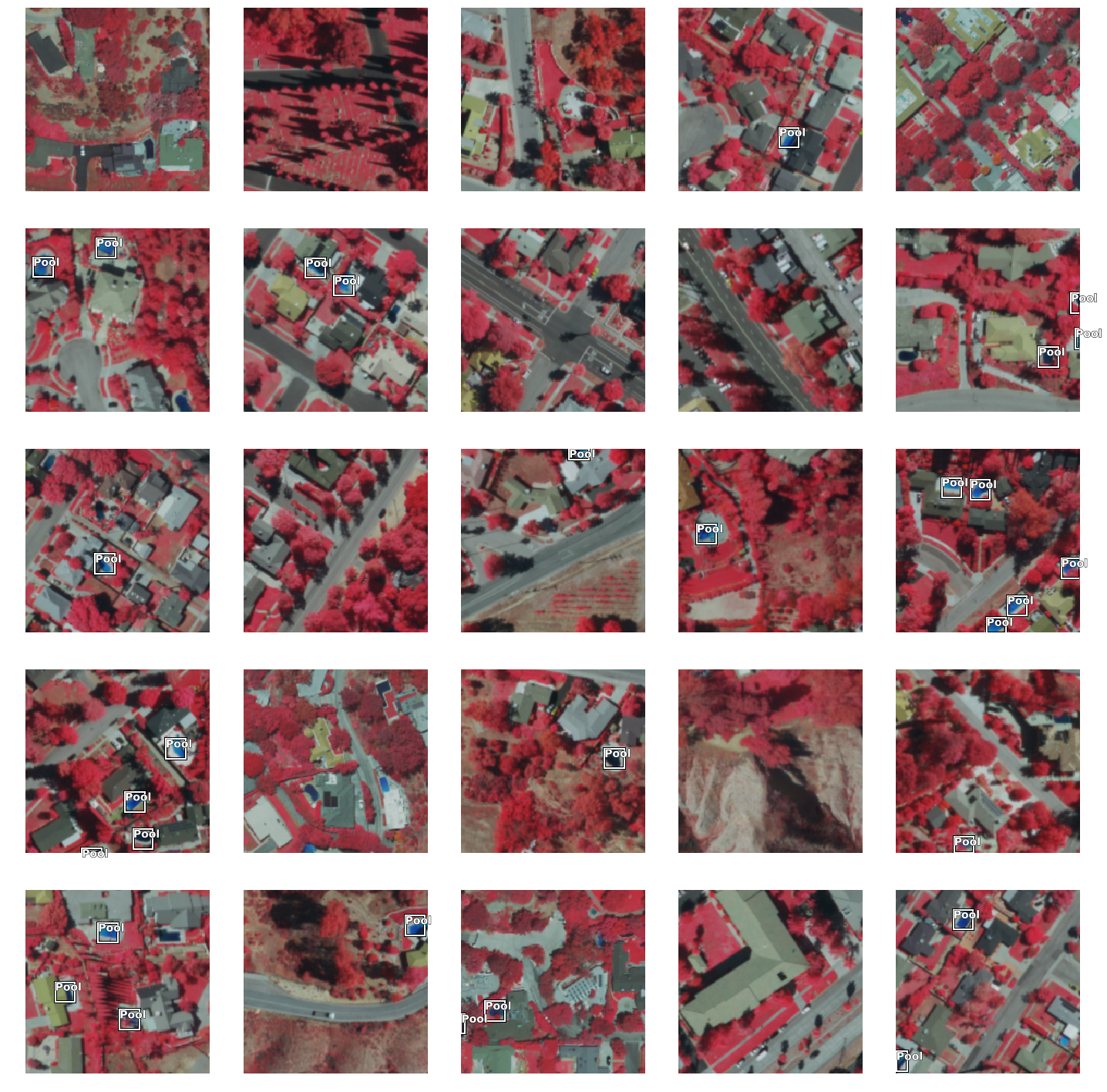

We can also create an image layer from one of these images to see what it looks like. Note that a chip may or may not contain a bounding box, and a single chip may contain multiple boxes.
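For reference, PASCAL VOC labels are XML files with one object element per bounding box. The sketch below parses such a file with Python's standard library. The sample content and the read_boxes helper are hypothetical and only follow the general PASCAL VOC layout; the files written by the export may contain additional fields.

```python
import xml.etree.ElementTree as ET

# Hypothetical label content in PASCAL VOC style, with two boxes of class 0.
SAMPLE_LABEL = """
<annotation>
  <filename>000000000.png</filename>
  <size><width>448</width><height>448</height><depth>3</depth></size>
  <object>
    <name>0</name>
    <bndbox><xmin>10</xmin><ymin>20</ymin><xmax>60</xmax><ymax>75</ymax></bndbox>
  </object>
  <object>
    <name>0</name>
    <bndbox><xmin>300</xmin><ymin>310</ymin><xmax>350</xmax><ymax>365</ymax></bndbox>
  </object>
</annotation>
"""


def read_boxes(xml_text):
    """Return a list of (class_name, xmin, ymin, xmax, ymax) tuples."""
    root = ET.fromstring(xml_text.strip())
    boxes = []
    for obj in root.iter("object"):
        bndbox = obj.find("bndbox")
        # Coordinates may be written as floats, so convert through float first.
        coords = [int(float(bndbox.find(tag).text))
                  for tag in ("xmin", "ymin", "xmax", "ymax")]
        boxes.append((obj.find("name").text, *coords))
    return boxes


boxes = read_boxes(SAMPLE_LABEL)
print(len(boxes))   # 2
print(boxes[0])     # ('0', 10, 20, 60, 75)
```

A chip without any labeled pool would simply yield an empty list here.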

Part 2 - model training

If you have already completed part 1, you should have both the training chips and the swimming pool labels. Please change the path to your own exported training data folder, which contains the "images" and "labels" folders.
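Before training, it can help to confirm that the folder has the expected layout. The following is a small sketch, assuming each chip in "images" is expected to have a label file of the same name in "labels" (as in the listings from part 1). The unmatched_chips helper is hypothetical, and the temporary folder only stands in for a real export.

```python
import tempfile
from pathlib import Path


def unmatched_chips(data_dir):
    """Return chip names that have an image but no label file, or the reverse."""
    data_dir = Path(data_dir)
    images = {p.stem for p in (data_dir / "images").glob("*.png")}
    labels = {p.stem for p in (data_dir / "labels").glob("*.xml")}
    return sorted(images ^ labels)


# Build a tiny stand-in export folder: two chips, but only one label file.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "images").mkdir()
    (root / "labels").mkdir()
    for stem in ("000000000", "000000001"):
        (root / "images" / f"{stem}.png").touch()
    (root / "labels" / "000000000.xml").touch()
    missing = unmatched_chips(root)

print(missing)  # ['000000001']
```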

import os

from pathlib import Path

agol_gis = GIS('home')

training_data = agol_gis.content.get('73a29df69b344ce8b94fdb4c9df7103d')

training_data

filepath = training_data.download(file_name=training_data.name)

import zipfile

with zipfile.ZipFile(filepath, 'r') as zip_ref:
    zip_ref.extractall(Path(filepath).parent)

data_path = Path(os.path.join(os.path.splitext(filepath)[0]))

data = prepare_data(data_path, {0:'Pool'}, batch_size=32)

data.classes

['background', 'Pool']

Visualize training data

To get a sense of what the training data looks like, the show_batch() method randomly picks a few training chips and visualizes them.

%%time

data.show_batch()

CPU times: user 3.43 s, sys: 1.14 s, total: 4.57 s Wall time: 26.5 s

Load model architecture

Here we use the Single Shot MultiBox Detector (SSD) [1], a well-recognized object detection algorithm, for swimming pool detection. An SSD model architecture that uses ResNet-34 as the base model is predefined in ArcGIS API for Python, which makes it easy to use.

Architecture of a convolutional neural network with an SSD detector [1]
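The grids, zooms, and ratios arguments used when creating the detector in this section describe the anchor boxes that an SSD predicts against. As a rough sketch, assuming the common SSD convention of one anchor centered in each grid cell and scaled by the zoom and aspect ratio, a 5x5 grid with a single zoom and ratio gives 25 anchors. The anchor_boxes helper is hypothetical, and how arcgis.learn builds its anchors internally is not shown here.

```python
def anchor_boxes(grid, zoom=1.0, ratio=(1.0, 1.0)):
    """Return (cx, cy, w, h) anchors in normalized [0, 1] image coordinates."""
    cell = 1.0 / grid
    w = cell * zoom * ratio[0]
    h = cell * zoom * ratio[1]
    anchors = []
    for row in range(grid):
        for col in range(grid):
            # Each anchor is centered in its grid cell.
            cx = (col + 0.5) * cell
            cy = (row + 0.5) * cell
            anchors.append((cx, cy, w, h))
    return anchors


anchors = anchor_boxes(grid=5, zoom=1.0, ratio=(1.0, 1.0))
print(len(anchors))  # 25
print(anchors[0])
```

On a 448-pixel chip, each anchor in this sketch would be roughly 90 pixels on a side.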

ssd = SingleShotDetector(data, grids=[5], zooms=[1.0], ratios=[[1.0, 1.0]])

Train a model through learning rate tuning and transfer learning

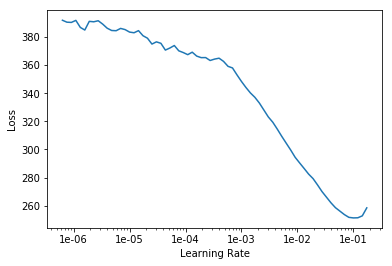

The learning rate is one of the most important hyperparameters in model training. Here we explore a range of learning rates to help us choose the best one.

ssd.lr_find()

Based on the learning rate plot above, we can see that the loss starts going down from about 1e-4. We set the learning rate to a range, from 1e-3 to 3e-2, which applies smaller rates to the first few layers, larger rates to the last few layers, and intermediate rates to the layers in between. Using different rates for different layers in this way is a common practice in transfer learning. Let's start with 10 epochs for the sake of time.
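To make the idea of a learning rate range concrete, here is a minimal sketch of how the two bounds of the slice passed to fit could be spread across layer groups. It assumes geometric spacing, as in fastai-style training loops, and three layer groups. Both are assumptions for illustration, and spread_learning_rates is a hypothetical helper, not part of arcgis.learn.

```python
def spread_learning_rates(lo, hi, n_groups):
    """Geometrically space learning rates from lo (earliest layers) to hi (last layers)."""
    if n_groups == 1:
        return [hi]
    step = (hi / lo) ** (1.0 / (n_groups - 1))
    return [lo * step ** i for i in range(n_groups)]


# Bounds taken from the slice used in this notebook; the group count is assumed.
rates = spread_learning_rates(1e-3, 3e-2, n_groups=3)
for group, rate in enumerate(rates):
    print(f"layer group {group}: lr = {rate:.4g}")
```

The earliest layers, which hold the most general pretrained features, change the least, while the newly added detection layers receive the largest updates.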

ssd.fit(10, lr=slice(1e-3, 3e-2))

| epoch | train_loss | valid_loss |
|---|---|---|
| 1 | 187.207718 | 117.501472 |
| 2 | 127.923302 | 100.232674 |
| 3 | 101.859406 | 132.383286 |
| 4 | 90.191681 | 76.736732 |
| 5 | 81.827988 | 70.427483 |
| 6 | 78.113594 | 71.897575 |
| 7 | 73.488182 | 69.986481 |
| 8 | 72.718140 | 66.302582 |
| 9 | 72.288734 | 100.715378 |
| 10 | 71.455414 | 140.783173 |

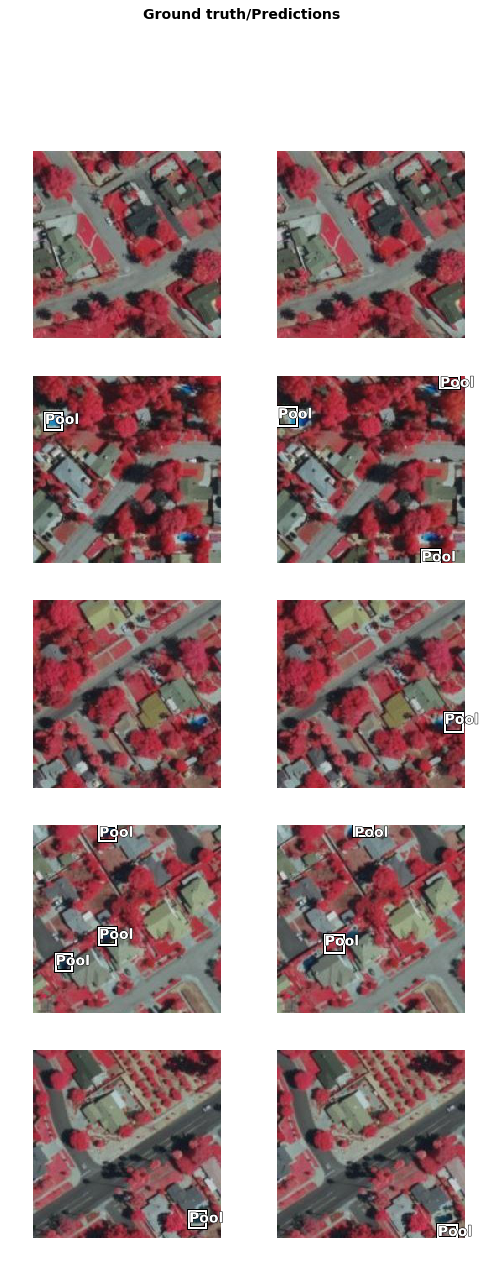

Detect and visualize swimming pools in validation set

Now that we have the model, let's look at how it performs. Here we plot 5 rows of images with a threshold of 0.3. The threshold is the minimum predicted probability that a swimming pool exists for a detection to be shown. A higher value means more confidence.

ssd.show_results(thresh=0.3)
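The effect of the threshold can be sketched with plain Python. The detections below are hypothetical, and keeping scores greater than or equal to the threshold is an assumption about the comparison, not a statement about how show_results is implemented.

```python
def filter_detections(detections, thresh):
    """Keep detections whose confidence score is at least thresh."""
    return [d for d in detections if d["score"] >= thresh]


# Hypothetical detections: a box as (xmin, ymin, xmax, ymax) plus a confidence score.
detections = [
    {"box": (10, 20, 60, 75), "score": 0.92},
    {"box": (200, 210, 240, 260), "score": 0.35},
    {"box": (300, 310, 350, 365), "score": 0.12},
]

print(len(filter_detections(detections, 0.3)))  # 2
print(len(filter_detections(detections, 0.5)))  # 1
```

A lower threshold shows more candidate pools at the cost of more false positives, while a higher threshold shows fewer but more confident detections.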

As we can see, with only 10 epochs we are already seeing reasonable results. Further improvement can be achieved through more sophisticated hyperparameter tuning. Let's save the model for further training or inference later. By default, the model is saved into a models folder inside the data_path that you specified earlier in this notebook.

ssd.save('5x5-10-deploy', publish=True, gis=gis)

Created model files at C:\Users\Admin\AppData\Local\Temp\detecting_swimming_pools_using_satellite_image_and_deep_learning\models\5x5-10-deploy

Published DLPK Item Id: 85bf36e8c5e24d6da21420e4f89f5ea7

WindowsPath('C:/Users/Admin/AppData/Local/Temp/detecting_swimming_pools_using_satellite_image_and_deep_learning/models/5x5-10-deploy')

Part 3 - deployment and inference

detect_objects_model_package = gis.content.get('85bf36e8c5e24d6da21420e4f89f5ea7')

detect_objects_model_package

Now we are ready to install the model. Installing the deep learning model item unpacks the model definition file, the model file, and the inference function script, and copies them to a "trusted" location under the raster analytics image server site's system directory.

detect_objects_model = Model(detect_objects_model_package)

detect_objects_model.install(gis=gis)

Submitted. Executing... InstallDeepLearningModel GP Job: jd496c532b0e7471cb27a01c788847582 finished successfully.

'[resources]models\\raster\\85bf36e8c5e24d6da21420e4f89f5ea7\\5x5-10-deploy.emd'

detect_objects_model.query_info(gis=gis)

{'Framework': 'arcgis.learn.models._inferencing',

'ModelType': 'ObjectDetection',

'ParameterInfo': [{'name': 'raster',

'dataType': 'raster',

'required': '1',

'displayName': 'Raster',

'description': 'Input Raster'},

{'name': 'model',

'dataType': 'string',

'required': '1',

'displayName': 'Input Model Definition (EMD) File',

'description': 'Input model definition (EMD) JSON file'},

{'name': 'device',

'dataType': 'numeric',

'required': '0',

'displayName': 'Device ID',

'description': 'Device ID'},

{'name': 'padding',

'dataType': 'numeric',

'value': '0',

'required': '0',

'displayName': 'Padding',

'description': 'Padding'},

{'name': 'threshold',

'dataType': 'numeric',

'value': '0.5',

'required': '0',

'displayName': 'Confidence Score Threshold [0.0, 1.0]',

'description': 'Confidence score threshold value [0.0, 1.0]'},

{'name': 'nms_overlap',

'dataType': 'numeric',

'value': '0.1',

'required': '0',

'displayName': 'NMS Overlap',

'description': 'Maximum allowed overlap within each chip'},

{'name': 'batch_size',

'dataType': 'numeric',

'required': '0',

'value': '64',

'displayName': 'Batch Size',

'description': 'Batch Size'}]}

Model inference
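The parameters reported by query_info include threshold and nms_overlap, which together control which detections survive. The following is a minimal sketch of greedy non-maximum suppression, using the default values shown in the output (0.5 and 0.1). It assumes that overlap is measured as intersection over union, which the output does not confirm. The detections and both helper functions are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two (xmin, ymin, xmax, ymax) boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, threshold=0.5, nms_overlap=0.1):
    """Greedy NMS over (box, score) pairs, highest score first."""
    candidates = sorted(
        (d for d in detections if d[1] >= threshold),
        key=lambda d: d[1],
        reverse=True,
    )
    kept = []
    for box, score in candidates:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(box, other) <= nms_overlap for other, _ in kept):
            kept.append((box, score))
    return kept


detections = [
    ((10, 10, 60, 60), 0.90),
    ((12, 12, 62, 62), 0.80),      # near-duplicate of the first box
    ((200, 200, 250, 250), 0.70),
    ((300, 300, 350, 350), 0.30),  # below the confidence threshold
]
kept = non_max_suppression(detections)
print(len(kept))  # 2
```

Raising nms_overlap keeps more overlapping boxes, which may matter where pools sit close together.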

To test our model, let's get a raster image with some swimming pools.

out_objects = detect_objects(input_raster=naiplayer.url,

model=detect_objects_model,

output_name="pooldetection_full_redlands",

context={'cellSize': 0.42, 'processorType': 'GPU'},

gis=gis)

out_objects

Visualize detected pools on map

result_map = gis.map('Redlands, CA')

result_map.basemap='satellite'

result_map.add_layer(out_objects)

result_map

Conclusion

In this notebook, we have covered a lot of ground. In part 1, we discussed how to export training data for deep learning using ArcGIS API for Python and what the output looks like. In part 2, we demonstrated how to prepare the input data, train an object detection model, visualize the results, and save and publish the model using the deep learning module in ArcGIS API for Python. In part 3, we covered how to install the published model, run inference on the NAIP imagery, and display the detected pools on a map.

References

[1] Wei Liu, Dragomir Anguelov, Dumitru Erhan, Christian Szegedy, Scott Reed, Cheng-Yang Fu: “SSD: Single Shot MultiBox Detector”, 2015; arXiv:1512.02325.